AMD AI Software Solved – MI300X Pricing, Performance, PyTorch 2.0, FlashAttention, OpenAI Triton

Matching Nvidia Performance With 0 Code Changes With MosaicML

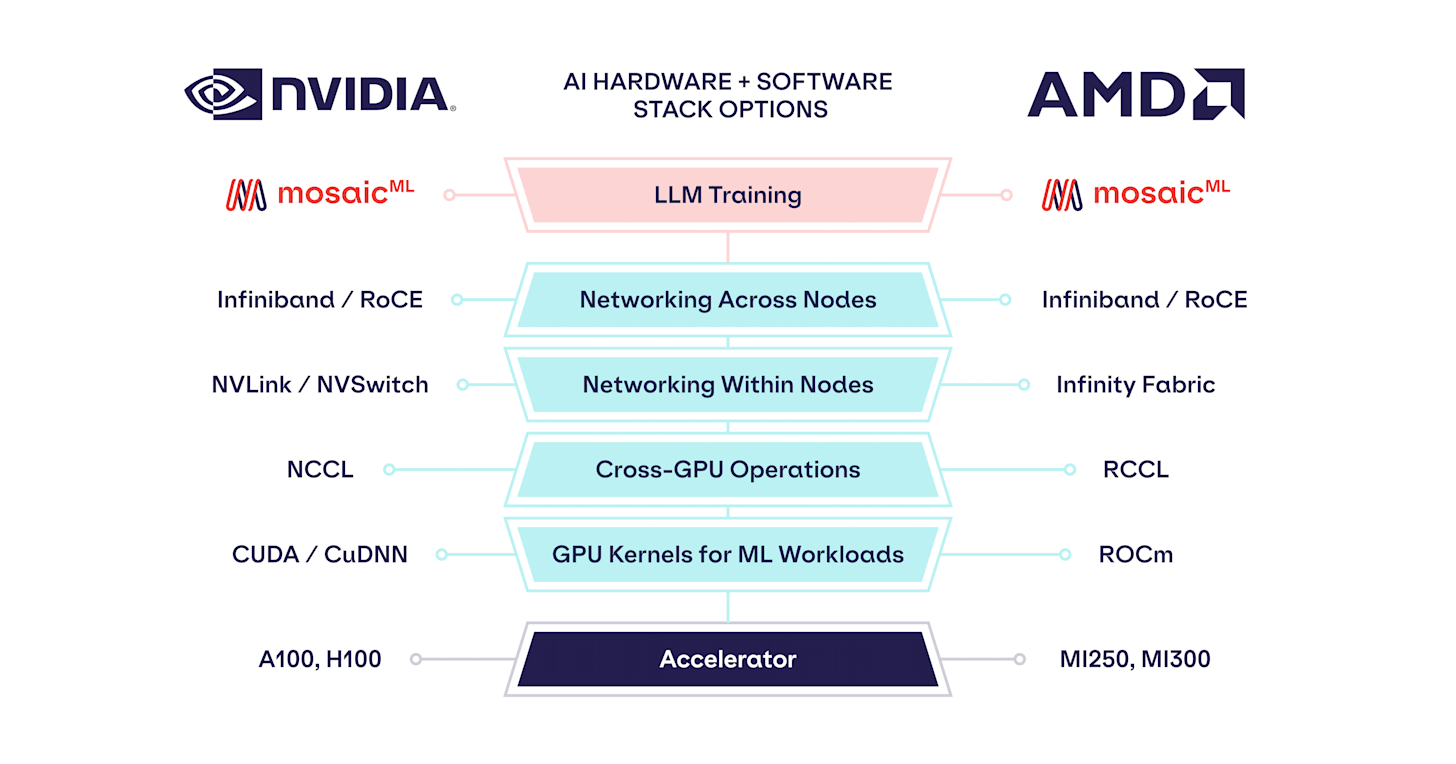

Nvidia has a massive software advantage that allows them to dominate machine learning training and charge huge markups. Meanwhile, machine learning researchers dream of a world where they can create their model in PyTorch, and not have to worry about GPU-level programming outside of calling a couple of external libraries. They want to be able to compile any arbitrary model and have it run at high performance across multiple chips.

The ultimate goal is that the researcher only needs to define the pipeline and tensor parallelism that occurs across nodes and can leave low-level code generation to the compiler stack. For those training fairly small language models, this is already the case with Nvidia. As models and clusters scale up, more custom CUDA kernels and hand-scheduled communications become necessary. No other software stack comes close to offering what Nvidia’s does. Now this is all changing.

Seven months ago, we described how Nvidia’s dominant software moat in machine learning was weakening rapidly due to Meta’s PyTorch 2.0 and OpenAI’s Triton. We have also discussed the work MosaicML has been doing since as far back as last year. With the latest PyTorch 2.0, MosaicML Composer, and LLM Foundry releases, AMD hardware is now just as easy to use as Nvidia hardware.

Today we want to share what is needed, what performance looks like, and the brewing battle between AMD’s MI300X and Nvidia’s H100 in 2024. We also want to share our view on MI300X pricing at both the OAM module level and in the cloud. This battle is critical to watch because Nvidia currently has no competition keeping them honest on pricing.

MosaicML, which was just acquired by Databricks for $1.3 billion, has focused on providing tools and infrastructure that make it easier and more efficient to train large language models, image generation models, and more. They take much of the difficulty out of working with large language models, from data preparation to training to infrastructure management.

To date, this was mostly for Nvidia hardware. MosaicML’s stack can achieve over 70% hardware FLOPS utilization (HFU) and 53.3% model FLOPS utilization (MFU) on Nvidia’s A100 GPUs in large language models without requiring custom CUDA kernels. For comparison, Google’s stack for the PaLM model on TPUv4 achieved only 57.8% HFU and 46.2% MFU. Likewise, Nvidia’s own Megatron-LM stack achieved only 52.8% HFU and 51.4% MFU on a 175B parameter model. Mosaic’s stack, much of which is open source, is an obvious choice unless every last drop of performance needs to be squeezed out by many dedicated scaling engineers on clusters of tens of thousands of GPUs.
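To make the HFU/MFU distinction concrete, here is a minimal sketch using the common approximation of about 6N training FLOPs per token for an N-parameter model (roughly 2N forward, 4N backward), ignoring attention FLOPs. MFU counts only those useful model FLOPs, while HFU counts what the hardware actually executes; with full activation recomputation the forward pass is replayed, adding roughly another 2N. The helper names and the throughput figure below are hypothetical illustrations, not MosaicML’s code or measured results; the 312 TFLOPS figure is the A100’s published dense BF16 peak.

```python
# Published dense BF16 tensor-core peak of an A100, in FLOP/s.
A100_BF16_PEAK = 312e12


def model_flops_per_token(n_params: float) -> float:
    """Approximate useful training FLOPs per token: ~2N forward + ~4N backward.
    Attention FLOPs (which grow with sequence length) are ignored here."""
    return 6.0 * n_params


def hardware_flops_per_token(n_params: float, full_recompute: bool) -> float:
    """FLOPs actually executed per token. Full activation recomputation
    replays the forward pass, adding roughly another 2N."""
    extra = 2.0 * n_params if full_recompute else 0.0
    return model_flops_per_token(n_params) + extra


def mfu(tokens_per_sec_per_gpu: float, n_params: float, peak_flops: float) -> float:
    """Model FLOPS utilization: useful FLOPs achieved / peak FLOPs."""
    return tokens_per_sec_per_gpu * model_flops_per_token(n_params) / peak_flops


def hfu(tokens_per_sec_per_gpu: float, n_params: float, peak_flops: float,
        full_recompute: bool = True) -> float:
    """Hardware FLOPS utilization: executed FLOPs (incl. recompute) / peak FLOPs."""
    executed = hardware_flops_per_token(n_params, full_recompute)
    return tokens_per_sec_per_gpu * executed / peak_flops


if __name__ == "__main__":
    # Hypothetical numbers for illustration only, not measured results.
    n_params = 7e9
    tokens_per_sec_per_gpu = 3_500
    print(f"MFU: {mfu(tokens_per_sec_per_gpu, n_params, A100_BF16_PEAK):.1%}")
    print(f"HFU: {hfu(tokens_per_sec_per_gpu, n_params, A100_BF16_PEAK):.1%}")
```

Because recomputation adds work without adding model progress, HFU can exceed MFU by up to about a third (8N versus 6N) when every layer is recomputed, and the two converge when little or nothing is recomputed.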

Now, MosaicML is going to be able to offer the same with AMD hardware. They have only just gotten their Instinct MI250 GPUs this quarter, but they are already close to matching Nvidia.

We profiled training throughput of MPT models from 1B to 13B parameters and found that the per-GPU-throughput of MI250 was within 80% of the A100-40GB and within 73% of the A100-80GB.

There is no code change required.

Under the hood, PyTorch’s ROCm backend handles mapping every floating point operation, every GPU command, and every distributed operation, such as `torch.matmul()`, `torch.cuda.current_device()`, `inputs.to('cuda:0')`, and `torch.distributed.all_gather()`, to the appropriate ROCm and RCCL operations on the AMD system. See Figure 1 for screenshots of what this looks like. We agree, it’s funny to run `torch.cuda` on an AMD machine, but it works!
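As a rough illustration of why no code changes are needed, the sketch below runs unmodified `torch.cuda`-style code and simply reports which backend the installed PyTorch build targets. It relies on real PyTorch attributes (`torch.version.hip` is set on ROCm builds, `torch.version.cuda` on CUDA builds, and `torch.cuda.is_available()` reports AMD GPUs on ROCm builds); the helpers `describe_backend` and `pick_device` are hypothetical names for this example, and the import is guarded only so the check script also runs where PyTorch is absent.

```python
try:
    import torch  # ROCm and CUDA builds of PyTorch expose the same Python API
except ImportError:  # let this check script run even without PyTorch installed
    torch = None


def describe_backend() -> str:
    """Report which GPU backend the installed PyTorch build targets."""
    if torch is None:
        return "pytorch-not-installed"
    # ROCm builds set torch.version.hip; CUDA builds set torch.version.cuda.
    if getattr(torch.version, "hip", None):
        return "rocm"
    if torch.version.cuda:
        return "cuda"
    return "cpu-only"


def pick_device() -> "torch.device":
    # On ROCm builds, "cuda" devices are AMD GPUs: torch.cuda.* is routed to HIP.
    return torch.device("cuda" if torch.cuda.is_available() else "cpu")


if __name__ == "__main__":
    print("backend:", describe_backend())
    if torch is not None:
        device = pick_device()
        x = torch.randn(1024, 1024, device=device)
        # Same call on either vendor; on a GPU it dispatches to that vendor's BLAS library.
        y = torch.matmul(x, x)
        print(device, tuple(y.shape))
```

The model code never branches on vendor; only the installed PyTorch build differs, which is what lets the same training script move between A100 and MI250 machines.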

This is impressive, but remember, Mosaic only got their MI250s this quarter, while they have been working with A100s for years. AMD needs to get them early samples of MI300 so they can start tuning their stack ASAP. Furthermore, OpenAI Triton support is still missing; full support is expected by the end of the year. The performance gap should shrink as AMD software improves and Mosaic switches from a ROCm-based to an OpenAI Triton-based FlashAttention.

Further gains are expected as the ROCm FlashAttention kernel is improved or replaced with a Triton-based one: when comparing a proxy MPT model with `n_heads=1` across systems, we see a substantial lift that brings MI250 performance within 94% of A100-40GB and 85% of A100-80GB.

Lastly, these results are for multiple-year-old GPUs. Nvidia’s A100 is a 2020 GPU, and AMD’s MI250 is a 2021 GPU. The more important question is how this work translates to Nvidia’s current H100 and AMD’s upcoming MI300X. We have previously done a deep analysis at the hardware, networking, and customer level, but let’s dive deeper into the pricing and performance discussion for MI300 and H100. This is critical as MI300 is gaining cloud traction and will be available from multiple cloud providers.

Below, we share what we believe clouds will need to charge for MI300 to earn a fair margin while still pressuring Nvidia, as well as what we believe MI300X will cost. Lastly, we discuss some caveats and strongholds that keep Nvidia ahead, as well as the upcoming H100 refresh.